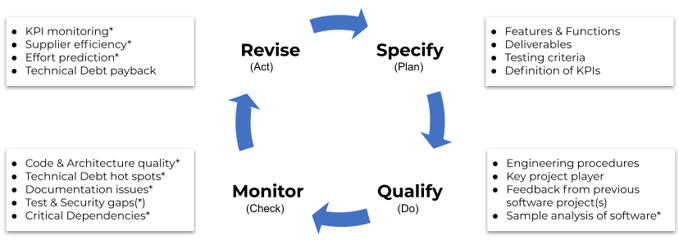

The qualified selection of software development service providers is of high strategic importance. Using a modified PDCA cycle (Plan-Do-Check-Act, after Deming), we describe what purchasing can and should do in each phase of a continuous supplier analysis (Specify-Qualify-Monitor-Revise).

As outlined in the first post of this series (Why Does the Procurement of Individual Software Need New Criteria for Supplier Qualification?), qualifying suppliers of software produced to specification is complex. For the procurement department, supplier selection is about gaining reasonable certainty that the supplier is the one best able to develop and support the software required for the company's own digitalization roadmap. For software, this means that the code base can be adapted to new requirements for many years, at the launch rhythm of the company's own product upgrades, and with normal effort.

It is clear that ultimately many specialist departments are involved in a supplier relationship with a software service provider. However, we have focused our attention in this blog series on procurement in order to show how important specialized knowledge of software technology is in procurement as well. This is the only way to minimize risks for the company throughout the entire software lifecycle.

Identifying which software properties and parameters are the determining and descriptive variables for the quality and scalability of the software and software engineering is not trivial.

At Cape of Good Code we use a modified PDCA cycle (Plan-Do-Check-Act methodology according to Deming), which has proven itself in quality assurance in many industries for decades. In our case, these are the phases adapted for software development: Specify-Qualify-Monitor-Revise.

Figure 1: Cape of Good Code supplier analysis cycle of software service providers in close cooperation with development, QA, and procurement

Cape of Good Code has developed a catalog of questions for each phase of this cycle. The catalog recurs in each iteration and must be answered by each (potential) development partner. The comparative evaluation then determines which supplier would be the right one from a technological point of view (!). Of course, in each of these phases there is a multitude of further questions and criteria from the specialist departments (e.g. creditworthiness, price, delivery time), each of which can be a no-go in its own right.
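To make the idea of a comparative evaluation with no-go criteria concrete, here is a minimal sketch. It assumes that each answer in the question catalog is scored on a 0 to 5 scale and that questions carry weights; the scale, the weights, the question keys, and the vendor names are all hypothetical and not taken from the Cape of Good Code catalog.

```python
from dataclasses import dataclass, field


@dataclass
class SupplierAnswers:
    name: str
    # Score per catalog question on an assumed 0-5 scale.
    scores: dict
    # No-go findings from specialist departments, e.g. a failed credit check.
    no_gos: list = field(default_factory=list)


def rank_suppliers(suppliers, weights):
    """Return (name, weighted average score) pairs, best first.

    Suppliers with at least one no-go finding are excluded entirely.
    Unanswered questions count as 0.
    """
    total_weight = sum(weights.values())
    ranked = []
    for supplier in suppliers:
        if supplier.no_gos:
            continue
        weighted = sum(w * supplier.scores.get(q, 0.0) for q, w in weights.items())
        ranked.append((supplier.name, round(weighted / total_weight, 2)))
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


# Hypothetical weights and answers, for illustration only.
weights = {"quality_tooling": 3, "team_skill": 2, "scalability": 1}
suppliers = [
    SupplierAnswers("Vendor A", {"quality_tooling": 4, "team_skill": 3, "scalability": 5}),
    SupplierAnswers(
        "Vendor B",
        {"quality_tooling": 5, "team_skill": 5, "scalability": 5},
        no_gos=["creditworthiness"],
    ),
    SupplierAnswers("Vendor C", {"quality_tooling": 2, "team_skill": 4, "scalability": 3}),
]

ranking = rank_suppliers(suppliers, weights)
print(ranking)
```

In this sketch Vendor B has the best technical answers but drops out because of its no-go finding, which mirrors the point that non-technical criteria can override the technological ranking.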

Our analysis cycle should be applied to every new software delivery (iteration). In the further lifecycle, the questions for the Qualify phase can be limited to examining the qualification of the project team. In a years-long collaboration on a product such as software, which is under constant pressure to innovate, it is essential to measure the supplier's relevant capabilities repeatedly during this time.

The focus of the Cape of Good Code supplier analysis is technology and software engineering. For the individual phases of the continuous supplier analysis, some topics are listed below as examples:

Specify: Definition of the requirements for the software by the client

- According to which test criteria should the results of an update be accepted?

- According to which KPIs should the quality of software deliveries and software engineering be evaluated?

- What level of detail can and should your own specifications satisfy? Do they have to be sufficiently fixed or are certain degrees of freedom allowed, which can only be shaped at the time of execution?

- For which software-dependent properties of your own development are the most changes expected during the development period?

- What is the earliest and what is the latest time to commission the software development (unambiguity of the specs vs. duration of the development)?

Qualify: Tool and interview-based qualification/selection of suppliers

- Are the right software tools being used to measure the quality of development results?

- What is the vendor's usual development efficiency?

- Is the project schedule reasonably synchronized with your own project schedule?

- What are the workload and skill level of the required developers?

- How are the offshore or nearshore development teams involved?

- Is the vendor able to bring in additional skilled developers during capacity peaks?

- What feedback can be obtained from previous customers of the supplier?

Monitor: Tool-based check of deliveries after each update

- How high is the technical debt of the software in terms of architecture and code quality?

- How costly is it to develop new features of the software?

- Is the know-how for this software still meaningfully distributed within the project team (i.e. are there any one-sided dependencies) and how useful is the documentation?

- How effectively are tests used to find bugs early?

- What technical debt needs to be prioritized and reduced with what projected effort?
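As an illustration of the knowledge-distribution question in the Monitor phase, the following sketch flags files where a single developer authored most of the changes. It assumes that change authorship is an acceptable proxy for knowledge and uses a hypothetical 80% dominance threshold; neither the proxy nor the threshold is taken from the Cape of Good Code analysis, and the file and developer names are made up.

```python
from collections import Counter


def knowledge_gaps(changes, dominance_threshold=0.8):
    """Find files whose change history is dominated by one developer.

    changes: iterable of (file_path, developer) pairs, one pair per change.
    Returns {file_path: (dominant_developer, share_of_changes)} for every
    file where one developer made at least `dominance_threshold` of changes.
    """
    per_file = {}
    for path, developer in changes:
        per_file.setdefault(path, Counter())[developer] += 1

    gaps = {}
    for path, counts in per_file.items():
        developer, count = counts.most_common(1)[0]
        share = count / sum(counts.values())
        if share >= dominance_threshold:
            gaps[path] = (developer, round(share, 2))
    return gaps


# Hypothetical change history, for illustration only.
history = [
    ("billing/invoice.py", "alice"),
    ("billing/invoice.py", "alice"),
    ("billing/invoice.py", "alice"),
    ("billing/invoice.py", "alice"),
    ("billing/invoice.py", "bob"),
    ("ui/form.py", "alice"),
    ("ui/form.py", "bob"),
    ("ui/form.py", "carol"),
]

gaps = knowledge_gaps(history)
print(gaps)
```

A real analysis would also need to account for documentation and for developers who have left the team, which this sketch ignores.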

Revise: Structured elimination of problems

- How should an improvement roadmap with KPIs on software and software engineering be set up?

- How are budgets and responsibilities determined to bring the development effort for maintenance and technical debt to the standard of about 20%?

- At which relevant points should documentation and testing of the software be improved?

- How should the knowledge distribution in the project be optimized, junior developers be trained in a structured way, or senior developers be deployed more efficiently?

- Which one-sided dependencies of developers and suppliers might have to be reduced?

The following is a summary of some recommended KPIs that have proven relevant in our experience:

| KPI | Definition | Impact Fields |
| --- | --- | --- |
| Maintenance effort (percentage, over time) | Non-value-adding portion of development capacity (and its trend) for bug fixing, "firefighting" or for reducing technical debt | costs, customer satisfaction |
| Feature effort (percentage, over time) | Value-added share of total development effort (and its trend) for feature and innovation development | productivity, customer satisfaction |
| Feature Effort Effectiveness | Excessive effort required to add new features to the code base. Values above the average indicate architecture and code problems and thus technical debt. | cost, efficiency, time to market |
| Knowledge gaps (incl. development locations) | Portions of the code and their distribution among developers for which there is little shared knowledge (either through collaboration or documentation) | efficiency, dependencies on developers |
| Technical Debt Remediation Effort | Effort forecasting for prioritized elimination of technical debt in the architecture and in the code | costs, productivity |
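The first two KPIs can be sketched as simple percentages per iteration. The example below assumes that development effort is tracked in hours and already classified as either feature work or maintenance work (bug fixing, firefighting, technical debt reduction); the data, the labels, and the way effort is classified are hypothetical. The 20% target is the "about 20%" standard mentioned in the Revise phase.

```python
from dataclasses import dataclass


@dataclass
class IterationEffort:
    label: str
    feature_hours: float
    maintenance_hours: float  # bug fixing, firefighting, technical debt reduction


def effort_shares(iteration):
    """Return (maintenance share, feature share) in percent for one iteration."""
    total = iteration.feature_hours + iteration.maintenance_hours
    if total == 0:
        raise ValueError(f"no effort recorded for {iteration.label}")
    maintenance = 100 * iteration.maintenance_hours / total
    return round(maintenance, 1), round(100 - maintenance, 1)


# "About 20%" is the standard named in the Revise phase.
MAINTENANCE_TARGET = 20.0

# Hypothetical effort data, for illustration only.
iterations = [
    IterationEffort("Iteration 1", feature_hours=600, maintenance_hours=400),
    IterationEffort("Iteration 2", feature_hours=700, maintenance_hours=300),
    IterationEffort("Iteration 3", feature_hours=800, maintenance_hours=200),
]

for iteration in iterations:
    maintenance, feature = effort_shares(iteration)
    status = "within target" if maintenance <= MAINTENANCE_TARGET else "above target"
    print(f"{iteration.label}: maintenance {maintenance}%, feature {feature}% ({status})")
```

Tracking both shares per iteration makes the trend visible, which is what "over time" in the KPI names refers to.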