Software assets play an increasingly important role in M&A transactions when evaluating business risks and the commercial or strategic value of the target. The tight time frame makes a tool-assisted approach to assessing the technology status and its inherent risks practically unavoidable. There is a growing understanding that widely used static code analysis tools cannot give the investor the same depth of insight as an analysis of the history of the code and its changes.

In this post we explain why identifying the relevant code and the potentially risky hotspots that need improvement has to consider more than just code quality and change hotspots. The next post focuses on the human factor and why the identification of key developers needs to look beyond knowledge islands.

Introduction

Several recent publications have also presented a tool-based approach and delivered some valuable insights:

- Code is only worth improving at relevant points. It is neither possible (due to budget and capacity restrictions), nor necessary to improve “quality” across the whole code base.

- Software systems are often too complicated to base the analysis just on the latest code snapshot and to derive reliable conclusions.

- The human factor of software development, like the identification of key developers, has to be part of the tool-based risk analysis and potential risk mitigation concepts. It seems great to have a few star developers, but when they become knowledge islands, i.e. contributors who exclusively create and maintain parts of the code, they can easily become a serious risk instead of being an asset.

- Software Due Diligence needs to be based on information derived from the code-base itself and the historical data from the applied development tools like a code repository, instead of functional checks, code reviews, and a couple of interviews only.

However, analyzing the historical data of the code also has pitfalls and can lead to simple mistakes that you have to be aware of when drawing conclusions. We will publish a series of blog articles about critical aspects of executing a tool-based Software Due Diligence.

We are going to explain how we at Cape of Good Code avoid these pitfalls by using our proprietary DETANGLE Analysis Suite as part of our Software Due Diligence methodology.

Hotspots — From KPIs to Code AND Architecture Quality

At first sight, code areas that change often look like good candidates to be analyzed with priority. Thus, analyzing the history of changes (sometimes called “behavioural code analysis”) and investigating the code quality of frequently changing code looks like the right approach, rather than static code analysis alone. But this is a chicken-and-egg problem: does bad code quality cause the high change rates, or do all the changes lead to bad code quality? It is hard to tell the difference. Furthermore, do the many changes originate from developing new features or from maintenance work like bug-fixing?
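To make the starting point concrete, here is a minimal sketch of a plain change-frequency count. It assumes the input is the text produced by `git log --name-only --pretty=format:`, which lists the touched file paths per commit separated by blank lines. The function name `change_counts` is a placeholder chosen for this illustration.

```python
from collections import Counter


def change_counts(log_text: str) -> Counter:
    """Count how often each file path appears in the log text.

    Assumes one path per line, with blank lines between commits,
    as printed by `git log --name-only --pretty=format:`.
    """
    paths = (line.strip() for line in log_text.splitlines())
    return Counter(path for path in paths if path)


# Hypothetical log excerpt covering three commits.
sample_log = "src/risk.py\nsrc/ui.py\n\nsrc/risk.py\n\nsrc/risk.py\nREADME.md\n"
counts = change_counts(sample_log)
print(counts.most_common(2))
```

Such a count only says where changes happen. It does not say whether a change was a new feature or a bug fix, which is exactly the gap raised above.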

So what is a better way to go? Measuring the maintenance effort of code areas would allow revealing the potential impact of bad code quality. In general, we suggest using business relevant KPIs as the starting point to identify risky hotspots of the code and perform a more accurate root cause analysis.

One important KPI is the Maintenance Effort Ratio. It shows the share of maintenance effort in the total development effort. It measures the maintenance effort by intelligently weighting and aggregating changed lines of code over the code repository and associating them with bugs captured by issue trackers.

The Maintenance Effort Ratio becomes critical (and thus a risk) when it exceeds a threshold in the range of 20 to 40%, as this development capacity is not spent on the value-adding development of new features. The ratio can be drilled down to selected code areas.
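The idea behind such a ratio can be sketched in a few lines. This is a deliberately simplified illustration, not the DETANGLE calculation: it uses unweighted changed lines as the effort proxy, and it assumes every change has already been linked to an issue of type "bug" or "feature". The names `Change`, `maintenance_effort_ratio` and `ratio_by_area` are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Change:
    path: str           # file touched by a commit
    lines_changed: int  # added plus deleted lines (unweighted effort proxy)
    issue_type: str     # "bug" or "feature", taken from the linked issue


def maintenance_effort_ratio(changes):
    """Share of changed lines that belong to bug-fixing work."""
    total = sum(c.lines_changed for c in changes)
    if total == 0:
        return 0.0
    bug_lines = sum(c.lines_changed for c in changes if c.issue_type == "bug")
    return bug_lines / total


def ratio_by_area(changes, depth=1):
    """Drill the ratio down to code areas (the first `depth` path segments)."""
    grouped = defaultdict(list)
    for change in changes:
        area = "/".join(change.path.split("/")[:depth])
        grouped[area].append(change)
    return {area: maintenance_effort_ratio(group) for area, group in grouped.items()}


# Hypothetical change history.
history = [
    Change("core/risk.py", 30, "bug"),
    Change("core/model.py", 70, "feature"),
    Change("ui/view.py", 50, "bug"),
    Change("ui/form.py", 50, "bug"),
]
overall = maintenance_effort_ratio(history)
per_area = ratio_by_area(history)
# Flag areas at or above the lower end of the 20 to 40% range.
critical = sorted(area for area, ratio in per_area.items() if ratio >= 0.2)
```

In this made-up history both areas are at or above the lower bound of 20%, so both would be candidates for a closer look. Where the line is drawn within the 20 to 40% range remains a judgment call.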

To investigate code quality further, static code analysis tools can be integrated into our DETANGLE Platform, which makes metrics like cognitive complexity, code duplication and potential bugs available as well. As a first conclusion, all areas of bad code quality BUT low maintenance effort can be ignored.
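This first conclusion amounts to a simple triage rule, sketched below. The thresholds, the use of a findings count as the quality signal, and the function name `triage` are assumptions made for this illustration only.

```python
def triage(effort_ratio, quality_findings,
           effort_threshold=0.2, findings_threshold=10):
    """Classify a code area by maintenance effort first, code quality second.

    effort_ratio: maintenance share of the area, between 0.0 and 1.0
    quality_findings: number of static analysis findings (assumed proxy)
    """
    if effort_ratio < effort_threshold:
        # Low maintenance effort: ignore the area, even if quality is bad.
        return "ignore"
    if quality_findings >= findings_threshold:
        return "review code quality"
    return "look for other root causes"


# Hypothetical areas: (maintenance effort ratio, static analysis findings).
areas = {
    "legacy/export": (0.05, 42),
    "core/risk": (0.45, 31),
    "core/sync": (0.38, 2),
}
decisions = {name: triage(ratio, findings) for name, (ratio, findings) in areas.items()}
```

The point of the ordering is that maintenance effort acts as the gate: a poor quality score alone does not make an area a priority.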

In reality, moreover, far from all maintenance hotspots are related to bad code quality, so further analysis is needed. In our experience, architecture quality is much more important than code quality when we talk about high-quality software with a long useful life-cycle. Our DETANGLE architecture quality metrics of feature coupling and cohesion are often correlated with the occurrence of high maintenance efforts.

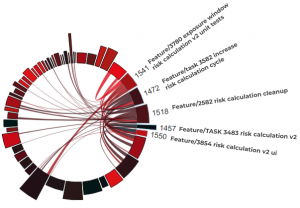

Figure 1: Local and global coupling of some product features.

The figure visualizes feature coupling of the German Corona-Warn-App across the code. The named arcs represent risk calculation features (v1.9). These features are locally coupled with each other, as shown by their connections. In addition, they show high global coupling to unrelated features.
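As a rough intuition for what coupling between features can mean, the following sketch counts the files that two features have both changed. This is an assumption made for illustration and not the DETANGLE metric, whose definition is not given here. The feature names and the function `feature_coupling` are hypothetical.

```python
from itertools import combinations


def feature_coupling(feature_files):
    """Map each coupled feature pair to the number of files both have touched.

    feature_files: dict of feature name -> set of file paths changed for it
    """
    coupling = {}
    for first, second in combinations(sorted(feature_files), 2):
        shared = feature_files[first] & feature_files[second]
        if shared:
            coupling[(first, second)] = len(shared)
    return coupling


# Hypothetical mapping derived from commits linked to feature issues.
touched = {
    "risk-calculation": {"risk/level.py", "risk/window.py", "common/time.py"},
    "risk-aggregation": {"risk/window.py", "common/time.py"},
    "settings-screen": {"ui/settings.py", "common/time.py"},
}
pairs = feature_coupling(touched)
```

In this toy data the two risk features share two files, while the unrelated settings feature overlaps with both of them in a single shared file. Overlap between unrelated features is the kind of pattern the figure describes as global coupling.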

Feature Effort Effectiveness

Therefore, we have introduced another KPI that captures the ease of adding new features. We named it the Feature Effort Effectiveness Index. The architecture quality metrics of feature coupling and cohesion show with a high degree of accuracy that bad architecture leads to lower Feature Effort Effectiveness, meaning that adding new features absorbs more capacity and more budget than it should.

Conclusion

Software Due Diligence benefits from a tool-based approach with clearly defined KPIs. It helps to achieve deep and plausible insight into the risks of an M&A transaction in a rather short time. But these tools have to be used with sensitivity and experience in order to avoid false or incomplete conclusions.

For instance, parts of the code might be less of a maintenance hotspot than code quality alone suggests. In contrast, architecture quality is a more reliable root cause of feature development hotspots. Knowing the main pitfalls and being able to avoid them in a Due Diligence investigation is essential for drawing the right conclusions from a tool-based approach.